Marco-MT Ranked First at WMT 2025

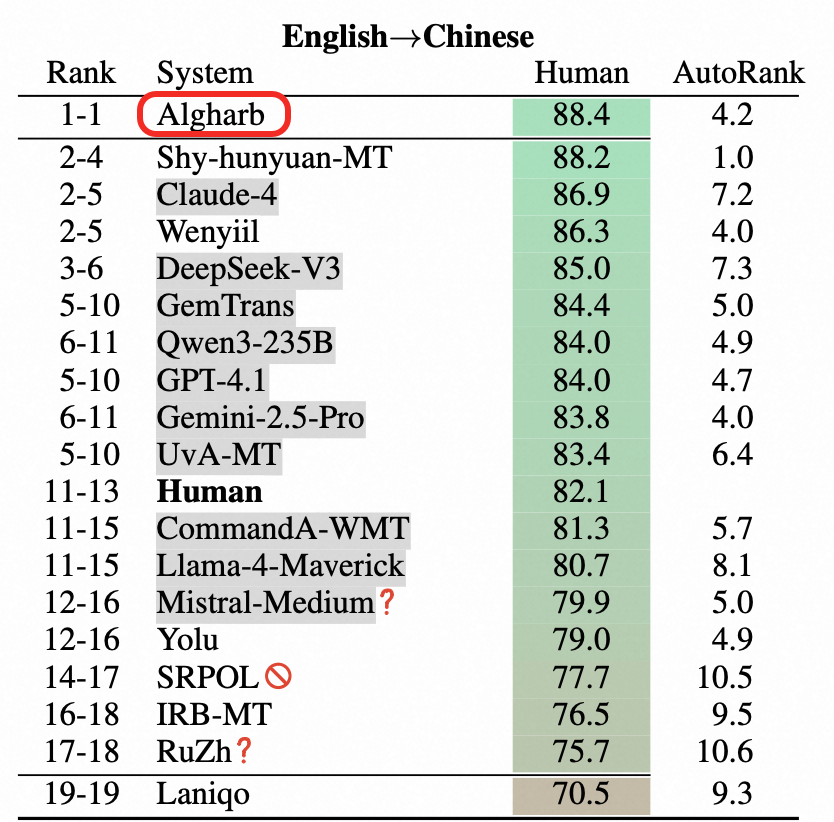

From November 5 to 9, 2025, the 20th Workshop on Machine Translation (WMT 2025) was held in Suzhou, China. Alibaba International’s team showcased Marco-MT, its large language translation model, at the event. Building on its results in the WMT competition, the team highlighted a landmark achievement: Marco-MT ranked #1 in English-to-Chinese translation, outperforming not only leading AI systems such as Claude-4 and GPT-4.1 but also human translators on the evaluation metrics. This result underscores Marco-MT’s capability and reliability in general-purpose translation tasks and sets a new benchmark for machine translation quality.

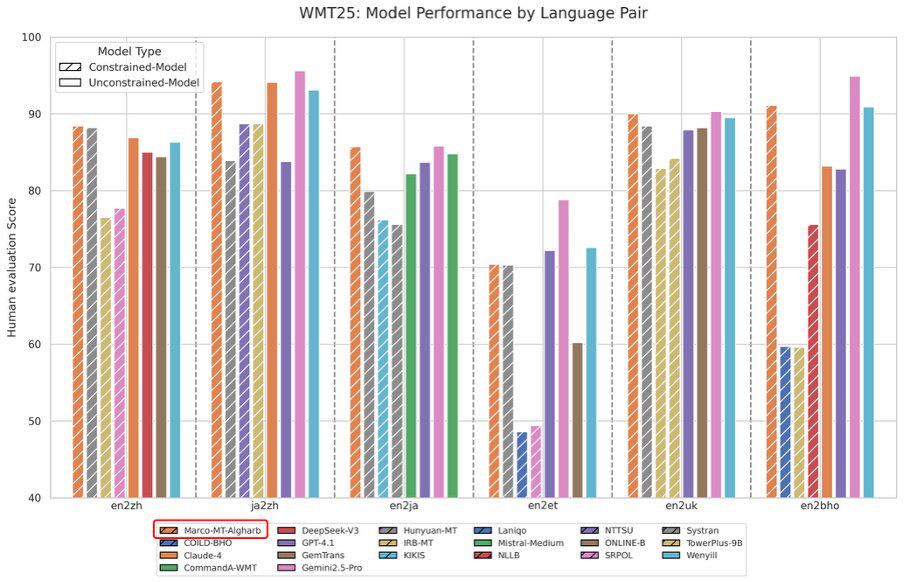

Excellent model performance across all language pairs

Technical report on Marco-MT’s translation capability

Marco-MT won first place in English→Chinese translation

Across the 13 language pairs we competed in, Marco-MT-Algharb achieved:

🏆 6 First Places

🏆 4 Second Places

🏆 2 Third Places

Three key innovations made this possible:

- Novel M2PO translation paradigm
- Two-stage SFT + CPO + MPO reinforcement learning
- Hybrid decoding with word alignment & MBR
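
For readers unfamiliar with MBR (minimum Bayes risk) decoding, here is a minimal sketch of the general idea: generate several candidate translations, score each against the others with a similarity metric, and keep the one that agrees most with the rest. This is an illustration under stated assumptions, not Marco-MT's actual decoder. The names `overlap_f1` and `mbr_select` are hypothetical, the token-overlap F1 is a toy stand-in for whatever metric a production system would use, and the word-alignment component is not shown.

```python
from collections import Counter


def overlap_f1(hypothesis: str, reference: str) -> float:
    """Token-level F1 between two strings (toy stand-in for a real MT metric)."""
    hyp_tokens = hypothesis.split()
    ref_tokens = reference.split()
    if not hyp_tokens or not ref_tokens:
        return 0.0
    # Multiset intersection counts each shared token at most min(count) times.
    common = sum((Counter(hyp_tokens) & Counter(ref_tokens)).values())
    if common == 0:
        return 0.0
    precision = common / len(hyp_tokens)
    recall = common / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


def mbr_select(candidates: list[str]) -> str:
    """Return the candidate with the highest average utility against the others."""
    if not candidates:
        raise ValueError("need at least one candidate")
    if len(candidates) == 1:
        return candidates[0]

    def expected_utility(index: int) -> float:
        # Treat every other candidate as a pseudo-reference.
        others = [c for j, c in enumerate(candidates) if j != index]
        return sum(overlap_f1(candidates[index], o) for o in others) / len(others)

    best_index = max(range(len(candidates)), key=expected_utility)
    return candidates[best_index]


# Hypothetical candidate translations sampled from a model.
candidates = [
    "the cat sat on the mat",
    "the cat sat on a mat",
    "a dog slept on the rug",
]
best = mbr_select(candidates)
print(best)
```

The selection favors the candidate closest to the consensus of the pool rather than the single most probable output, which is why MBR tends to be robust against outlier hypotheses.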

With continued investment in our large language translation models and deeper collaboration with cross-border e-commerce platforms, the Alibaba International team is confident in its ability to further refine these models, driving AI-powered innovation that accelerates global e-commerce growth, enhances profitability, and unlocks significant operational efficiencies.